In this paper, we created and analysed the Euclid Morphology Challenge to help the Euclid Consortium choose the best software package for galaxy catalogue production. In this challenge, we asked five galaxy-fitting experts to run their software (DeepLeGATo, Morfometryka, SourceXtractor++, Galapagos-2, and Profit) on a common dataset that we simulated. They had to predict the Sérsic parameters (flux, half-light radius, Sérsic index and ellipticity) for four different types of images, including single Sérsic, double Sérsic and more realistic simulations (produced by an FVAE, see below). We analysed this complex dataset with three metrics: the bias, the dispersion and the outlier fraction. We found many interesting features and comparisons, and I describe some of them below.

We first show that most of the codes achieve no major bias and a dispersion below 10% for all single Sérsic parameters. Nevertheless, we identified and analysed behaviours specific to the different software packages. For example, DeepLeGATo performs better for faint objects than for bright ones. One could therefore use it for faint objects while using one of the other codes for bright objects.
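As a rough illustration of the three metrics named above, here is a minimal Python sketch. The exact definitions used in the challenge are not given on this page, so the ones below are assumptions: the bias is taken as the median relative error, the dispersion as half the width of the central 68% interval of the relative error, and the outlier fraction as the share of objects whose relative error deviates from the bias by more than a chosen threshold. The function name `summary_metrics`, the `outlier_threshold` parameter and the mock data are all hypothetical.

```python
import numpy as np


def summary_metrics(predicted, true, outlier_threshold=0.5):
    """Return (bias, dispersion, outlier_fraction) for one fitted parameter.

    Assumed definitions (not taken from the paper):
      bias             = median of the relative error (pred - true) / true
      dispersion       = half the width of the central 68% interval
      outlier_fraction = fraction with |relative error - bias| > threshold
    """
    predicted = np.asarray(predicted, dtype=float)
    true = np.asarray(true, dtype=float)

    rel_err = (predicted - true) / true
    bias = np.median(rel_err)

    # 16th and 84th percentiles bracket the central 68% of the distribution.
    p16, p84 = np.percentile(rel_err, [16, 84])
    dispersion = 0.5 * (p84 - p16)

    outlier_fraction = np.mean(np.abs(rel_err - bias) > outlier_threshold)
    return bias, dispersion, outlier_fraction


# Mock data: half-light radii recovered with 5% Gaussian scatter and no bias.
rng = np.random.default_rng(0)
true_radius = rng.uniform(0.2, 2.0, size=1000)
predicted_radius = true_radius * (1.0 + rng.normal(0.0, 0.05, size=1000))

bias, dispersion, outlier_fraction = summary_metrics(predicted_radius, true_radius)
print(f"bias={bias:.3f}  dispersion={dispersion:.3f}  outliers={outlier_fraction:.3f}")
```

In practice one would likely compute these metrics in bins of magnitude, which is how a difference between faint and bright objects, such as the one noted for DeepLeGATo, would show up.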

In this paper, we simulate realistic galaxies with a deep learning model called a Flow Variational AutoEncoder (FVAE). The galaxy morphologies are learned from real space-based images taken by the Hubble Space Telescope. In addition to the realistic morphologies learned by the VAE, we also have good control over the parameters (size, ellipticity and Sérsic index) thanks to a trained regressive flow. We used our simulations to forecast the number of galaxies of interest for galaxy science that Euclid will be able to resolve. We also created a benchmark to evaluate the speed of our model relative to classical simulations.

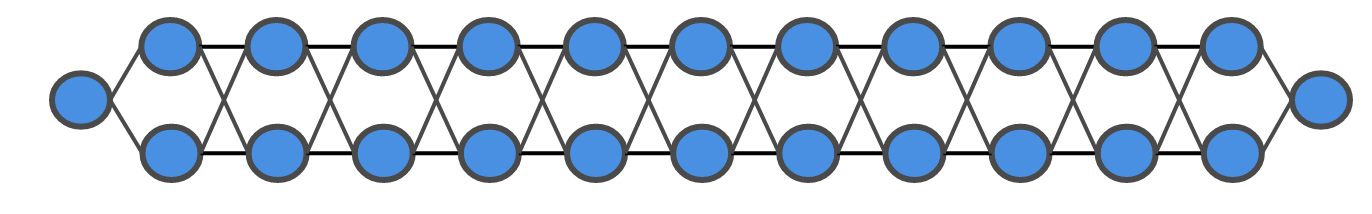

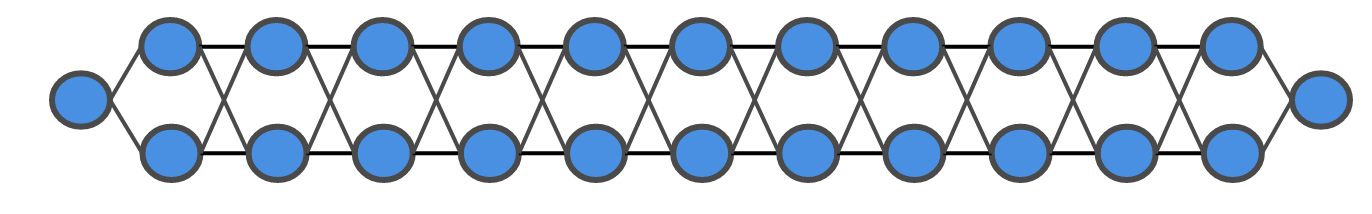

In this ongoing work, published (and refereed) at the Conference on Neural Information Processing Systems (NeurIPS), we explore the probabilistic version of the U-Net (PUnet), recently proposed by Kohl et al. (2018), and adapt it to automate the segmentation of galaxies for large photometric surveys. We focus especially on the probabilistic segmentation of overlapping galaxies, a problem known as blending. We show that, even when trained with a single ground truth per input sample, the model properly captures a pixel-wise uncertainty on the segmentation map. This uncertainty can then be propagated further down the analysis of the galaxy properties.
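To make the idea of a pixel-wise uncertainty concrete, the sketch below shows one common way to summarise several segmentation maps sampled for the same image: average them into a per-pixel membership probability and compute the binary entropy of that probability. This is an illustration under stated assumptions, not the method of the paper. The samples here are mocked with random disks instead of being drawn from a PUnet, and the function name `pixelwise_uncertainty` and the choice of entropy as the uncertainty measure are hypothetical.

```python
import numpy as np


def pixelwise_uncertainty(samples, eps=1e-12):
    """Summarise sampled binary masks of shape (n_samples, height, width).

    Returns the per-pixel mean mask (fraction of samples that flag the pixel)
    and the per-pixel binary entropy of that fraction, in nats.
    """
    samples = np.asarray(samples, dtype=float)
    mean_mask = samples.mean(axis=0)

    # Clip to avoid log(0); the entropy is ~0 where all samples agree.
    p = np.clip(mean_mask, eps, 1.0 - eps)
    entropy = -(p * np.log(p) + (1.0 - p) * np.log(1.0 - p))
    return mean_mask, entropy


# Mock "samples": the same galaxy segmented as a disk whose radius varies,
# standing in for draws from a probabilistic segmentation model.
rng = np.random.default_rng(1)
yy, xx = np.mgrid[:32, :32]
radii = rng.uniform(6.0, 10.0, size=16)
samples = np.stack([(xx - 16) ** 2 + (yy - 16) ** 2 <= r ** 2 for r in radii])

mean_mask, entropy = pixelwise_uncertainty(samples)
print("max entropy (nats):", entropy.max())
```

In this toy case the entropy is close to zero at the galaxy centre and far from it, and largest along the edge where the samples disagree, which is the kind of map that could be carried into later measurements.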
